The rapid pace of updates and upgrades to operating systems, software frameworks, libraries, and programming language versions has been a boon to fast-paced software development. It has also come back to bite us, because we now have to manage a great many dependencies, in different versions, across different environments.

This usually manifests in the form of two major difficulties – (i) keeping all the aspects of your development, testing, staging, and deployment environments in sync with your application’s requirements, and (ii) ensuring compatibility between the different frameworks, libraries, and other components of your applications. A significant share of time, energy, and resources is spent just ensuring that software runs predictably.

It is for this reason that operating system (OS) and server virtualization have gained so much popularity in the last decade. Allowing multiple applications and their components to be isolated, yet consolidated onto one single system, and being able to reliably move them from one environment to another, has gone a long way in accelerating software application development and deployment. One such virtualization technology that has been taking the software industry by storm is Docker containers.

Even though container technology has been around for a long time, it was only with the launch of Docker in 2013 that containers became popular and mainstream. Docker changed the way software is built and shipped, and helped start the cloud-native development movement.

In this post, we will do a deep dive into Docker containers. Before we explore Docker and its very many functionalities, it is important to understand the fundamentals of containers – what they are, the problems they solve, how they work under the hood, and how they differ from other virtualization methods. And that is where we will start, before diving into the Docker ecosystem – its architecture, components, commands, and workflow. Consider this post as a one-stop guide for an overview of everything you need to know about containers and Docker!

Here’s a preview of what we’ll be covering so you can easily navigate or skip ahead in the guide:

- An Overview of Containers

- What is Docker?

- Containers vs. Virtual Machines

- Docker’s Architecture, Components, and Commands

- Advantages of using Docker Containers

- Wrapping Up Our Containers

An Overview of Containers

Before we understand what containers are and how they contribute towards cloud-native development, let’s try to understand some hardships that have pestered development and operations teams for years – issues that containers have readily been able to solve.

Though there are very many ways in which containers can make our lives easier, to get a gist of how useful they can be, let us discuss two common scenarios that effectively demonstrate the ease and convenience that containers add to our software building pipelines.

A Life without Containers

Let us take a look at some aspects of application development and deployment without containers.

Application Development without Containers

Development teams comprise individuals with different personal computers – different operating systems, different programming language versions, different library and framework versions, and different system configurations.

As you can imagine, this can make it quite difficult for everyone to smoothly collaborate – at least in the beginning, when installing multiple services for multiple applications for the first time. This is quite common when these teams onboard new developers, who might need help in setting up their respective development environments. These environment mismatches and corresponding efforts to maintain a consistent environment for everyone can continue to affect development routines even further down the line.

Before we dive into a (now ubiquitous) solution for this relatively outdated development practice, let’s look at application deployment with non-containerized software development pipelines.

Application Deployment without Containers

Similarly, before containers became ubiquitous, most organizations deployed applications in a painstaking fashion. Developer teams, after having prepared the code to be shipped, would pass on the application’s code files, along with installation and setup instructions for dependencies and other configuration parameters, to the operations team.

The operations team would then follow these instructions, install the various dependencies, and attempt to prepare the requisite setup on the production server. However, the production server would often be a completely different environment from the one used for development. This would lead to conflicts caused by mismatched dependency versions, compatibility issues, and other misunderstandings that the operations team would be unlikely to resolve on their own.

If your application’s test suites are not thorough enough, many such issues are also likely to bleed into production and make things much worse. As you can see, this is not the most efficient or optimal way of delivering robust web applications. It is prone to error and can lead to wastage of time, effort, and other resources valuable to your organization.

Oh, how nice it would have been if only there was a way to have all of these components of your application’s environment packaged into one lightweight, easily portable, secure bundle, that everyone – including developers, operators, and administrators, could instantiate on their own machines by running just one command!

Well, guess what? This is exactly what containers let you do!

What is a Container?

A container is an isolated run-time environment, with its own set of processes, services, network interfaces, dependencies, and configurations – all of which are packaged into one standard unit of software. To put it simply, a container includes everything that is needed to run an application – code, runtime, system tools, system libraries, and settings.

It is a secure, lightweight, standalone, portable, executable package that is isolated from other containers and from the host’s environment, yet shares the host system’s OS kernel. The host here refers to the machine on which the containers are being run.

These containers can be used to house and run individual services (or full-fledged applications) separately with their own dependencies – undisturbed by any differences in the underlying host’s or other containers’ environment configuration. This isolation is especially important because developers, when building applications, need to coordinate multiple services (e.g., a database, a web server, a load balancer, etc.) across one or more applications. Without containerization, these components are likely to share and interfere with each other’s dependencies, and can occasionally break your application(s).

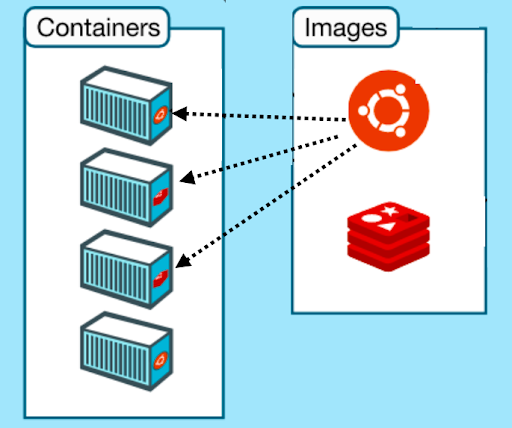

Equally important is how containers allow you to easily, and reliably move your applications and their services around different compute environments, as individual entities – instead of as bulky blobs with their installation instructions (or scripts) and dependencies baggage. These sleek containers package all your application contents smoothly into individual images. These images can be instantiated as separate containers as many times as you like, using just one command. We’ll look at what images are, and how you can create one, a little later in this post. For now, you can think of them as a container template that stores information about your container’s setup.

That’s quite a lot of information about containers to package into one section. Don’t worry, we’ll revisit all the important aspects of containers throughout the post to give you a well-rounded understanding of containers, how they are created, how they work, and their numerous benefits.

Let us now revisit the issues we faced in application development and deployment without containers (in the previous section), and see how much easier things are with containers.

How do Containers Help?

Ease of Application Development with Containers

Previously, in this post, we got a sense of how difficult it can be to keep everyone working on the same development setup, and more importantly, to set up identical development environments for newly onboarded developers. We discussed how this impedes collaboration and wastes time and resources that would be better spent building new features.

With containers, however, setting up development environments is as easy as acquiring a predefined image (with all its requirements) and using just one command – to instantiate and run containers as isolated application services that don’t interfere with each others’ dependencies, and are capable of working together to serve as a complete, fully functional application.

Thanks to this, organizations can now prepare their own, custom container images, which can be used by their developers to get started with the requisite development environments.

Ease of Application Deployment with Containers

As with application development, deployment is also much easier to streamline with the help of containers. We saw previously how, without containers, developers had to share the application code with the operations team, along with installation and setup instructions that needed to be carefully followed to set up the application in the production environment.

With containers, developer and operations teams can work together to create container images that can be easily shipped and instantiated on your servers in the cloud. Apart from reducing the number of installation and setup commands, containers let you house each application service in its own little world, with its ideal set of requirements, and therefore reduce the chance of dependency mismatches and conflicts.

Apart from shipping web applications to production servers, containers can also be used to share full-fledged or individual software applications over the internet. This is also quite common in popular open-source projects you might come across on GitHub, where links to Docker container images of application components are shared for developers to pick up and instantiate on their machines. Many hundreds of thousands of such Docker container images can also be found on Docker Hub to play around with.

Application development and deployment pipelines are two common, easily observable use cases of containers that we have covered in this post. However, the advantages of using containers cover a wider expanse that we will explore in a later section. Before that, let’s talk about the key player here, that is – Docker – what it is, how it lets you create and manage containers, and how it works under the hood.

What is Docker?

Docker is a software platform that lets you develop, build, ship, manage and run containerized applications.

Containers, as a technology, existed long before Docker, but Docker is what has made them so popular and, more importantly, approachable. If you really want, you can set up your own container environments from scratch in Linux. However, we can all imagine how difficult that would be, given how low-level these things are. This is where Docker comes to the rescue. It takes care of all the underlying functionality and low-level implementations and exposes a high-level API that is lightweight, robust, flexible, and intuitive, making it easy for end-users to work with containers. With Docker, you can pull a container image and run it using just one command, and build your own image with one more.

All of this is made possible thanks to the Docker Engine, which forms the core of the platform. The Docker Engine is the underlying software that utilizes your machine’s (also known as the Docker host) OS kernel for creating and running these containers. Docker started with only Linux-based systems, but since 2016 also supports Windows and Mac operating systems.

Docker’s Rise to Success

One of the most important problems that Docker solved for developers was the all-too-common issue of an application running fine on your machine, but somehow breaking when shipped to the server. In 2013, Docker was launched as an open-source project that leveraged existing container technology for creating and managing containers. Since then, Docker has reshaped the way software is built, packaged, delivered, and deployed. Besides, Docker’s launch coincided with the DevOps revolution, allowing the two to complement each other and accelerate software delivery pipelines.

By taking kernel features that had been in Linux for years and packaging them into an approachable product, Docker hit the nail on the head for the technology market.

How Docker Containers Work

It might be tempting to start playing around with Docker already. But before we cover all Docker components (e.g., Dockerfile, Docker Hub, images, etc.), its installation, commands, and other details in a later section, let’s look at how these Docker containers work under the hood.

Revisiting Operating Systems

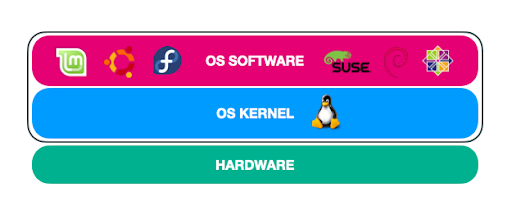

Operating systems comprise two layers on top of the hardware layer – the OS kernel layer (e.g., Linux) and the OS software (e.g., Ubuntu, Fedora, etc.). The OS kernel is the core of the operating system. It is what interacts with your machine’s hardware and facilitates its communication with the software components. On top of this kernel resides the OS software. This comprises the operating system’s UI, compilers, file managers, applications, tools, etc. It is this software that makes your OS stand out (among peers with the same kernel).

If we talk about the Linux ecosystem, all Linux OSes share a common Linux kernel, on top of which various customized software distributions like Ubuntu, Fedora, CentOS, etc. have been developed.

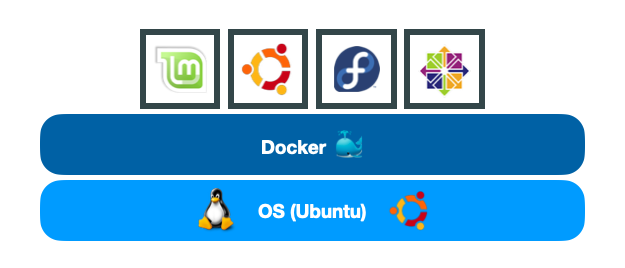

Containers share and utilize the kernel

When you use Docker on your machine, it is the host’s OS kernel that Docker shares and uses to instantiate new containers. Therefore, Docker lets you create containers from any OS software that is supported by the underlying kernel. For example, on a Linux machine, you can (conventionally) only create containers of Ubuntu, Fedora, CentOS, and other distributions built on the Linux kernel. Each container then only has to store the extra contents of the OS’s software, instead of the whole kernel. This is what makes containers extremely lightweight and therefore easier to move around and work with.

Example of supported OS distributions with Linux kernel

Note: This however does not mean that you can’t run a Linux container on a Windows or macOS machine. You can, though that happens with the help of a Linux Virtual Machine (VM) running on top of your Windows/Mac OS that provides the kernel and runs the containers.
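One way to see the shared kernel for yourself is to compare the kernel release reported by the host with the one reported inside a container. The sketch below is only an illustration: `compare_kernels` is a hypothetical helper name, and it assumes Docker is installed and that the small public `alpine` image can be pulled from Docker Hub.

```shell
# Hypothetical helper: print the kernel release seen by the host and by a container.
# Assumes Docker is installed; alpine is a small public image on Docker Hub.
compare_kernels() {
  echo "host kernel:      $(uname -r)"
  echo "container kernel: $(docker run --rm alpine uname -r)"
}
```

Calling `compare_kernels` on a Linux host should print the same release twice, since the container has no kernel of its own. On Windows or macOS, the second line should instead show the kernel of the Linux VM that Docker runs behind the scenes.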

More about Containers

These containers live only as long as the process inside them is alive. Once the container’s task (or process) stops, fails, crashes, or completes, the container stops and exits. The fact that containers can’t conventionally (or natively) run different operating systems might sound like a disadvantage. But it is not, because that’s not what containers are for. Containers are not meant to run operating systems. They are meant to run specific tasks and processes, for example, to host instances of web servers, databases, application servers, or for running generic computational tasks.
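The link between a container and its main process can be sketched with two short-lived containers. The helper name `lifecycle_demo` and the container names `short-lived` and `long-lived` are made up for this illustration, and the `alpine` image is again an assumption.

```shell
# Hypothetical helper illustrating that a container's lifetime follows its main process.
lifecycle_demo() {
  # The main process (echo) finishes immediately, so the container exits right away.
  docker run --name short-lived alpine echo "done"
  # The main process (sleep) runs for 60 seconds, so the container stays up that long.
  docker run -d --name long-lived alpine sleep 60
  # -a lists stopped containers too, so both containers appear with their status.
  docker ps -a
  # Clean up both containers (-f also stops the one still running).
  docker rm -f short-lived long-lived
}
```

Nothing runs until you call `lifecycle_demo`. In the `docker ps -a` output, the first container should be listed as exited and the second as still up.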

This is why containers are often confused with and contrasted against VMs, which for most purposes, are their bulkier, overkill counterparts. Let’s compare the two.

Containers vs. Virtual Machines

Each virtual machine runs its own complete, separate OS (kernel + software) image on top of a hypervisor. This turns out to be quite an overhead in terms of memory and storage footprint. As we discussed before, containers require neither a whole OS nor a hypervisor; they share the host’s kernel and store just the extra software contents that differentiate them from other OSes (with the same kernel). This makes containers extremely lightweight, typically a few megabytes to a few hundred megabytes (MB) in storage size, as compared to sizable VMs that take up gigabytes (GB) in storage.

Containers (left) vs. Virtual Machines (right) [Source]

For many of the web services and processes that organizations wish to isolate, VMs turn out to be quite an overkill, leading to unnecessary wastage of resources. Yes, we did want some isolation between the different applications and their components, but not so much! Besides, we would like these virtualization solutions to be portable – a checkbox that containers easily tick off, unlike heavy VM instances.

Let’s compare VMs and containers in specific aspects of performance:

- Memory / Resource Utilization: In the case of VMs, the memory and resource utilization is much higher because there are multiple virtual OSes (with their kernels) running in parallel. Containers, on the other hand, share the kernel and therefore consume fewer resources.

- Disk space: Similarly, VMs consume more disk space (in GBs) and are much heavier because of having to store a complete copy of the OS for each VM instance, unlike containers (in MBs).

- Booting speed: Because of the above two factors, VMs take much longer to boot up (a few minutes), as compared to containers, which can boot up in a few seconds.
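If you want a rough feel for the disk and startup numbers on your own machine, something like the following can help. It is a crude sketch: `container_footprint` is a hypothetical helper, timing is measured only to the nearest second, and the figures will vary with the image and hardware you use.

```shell
# Hypothetical helper: show an image's size and roughly time one container run.
container_footprint() {
  docker pull alpine            # fetch a small base image
  docker images alpine          # the SIZE column shows its on-disk size
  start=$(date +%s)
  docker run --rm alpine true   # start a container, run a no-op, remove it
  end=$(date +%s)
  echo "start-to-exit took about $((end - start)) second(s)"
}
```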

However, regardless of what these metrics suggest, it’s not fair to take sides here. VMs have their own advantages, such as running several different OSes on the same physical machine with stronger isolation between them. Containers and VMs serve different purposes. And it is for this reason that you’ll find that in the industry, both are used in conjunction (as shown below), rather than one replacing the other.

Containers with Virtual Machines [Source]

Docker’s Architecture, Components, and Commands

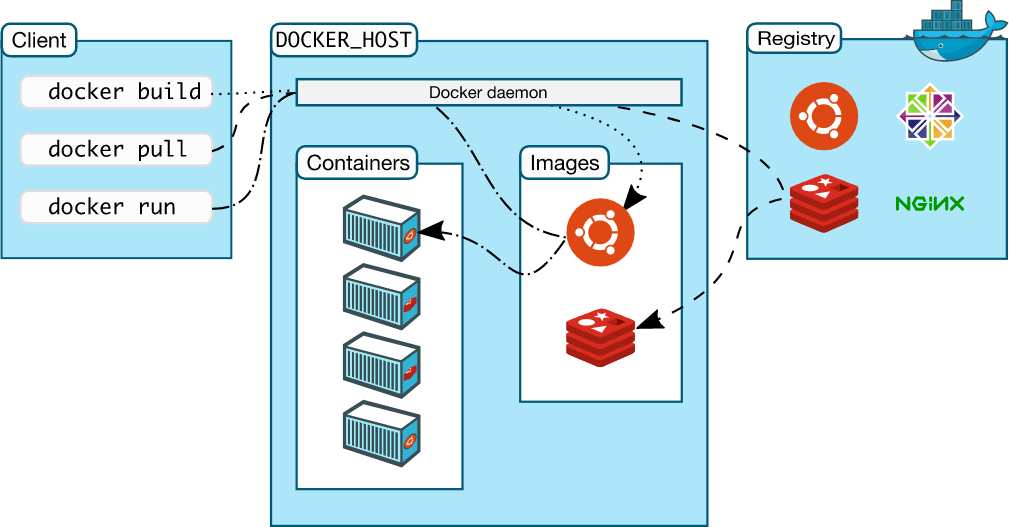

Now that we have gotten some understanding of the internals of containers, let’s discuss some of the primary functionalities of Docker – its architecture, its components, and what it enables us to achieve with containers through its wonderful API.

Docker Engine

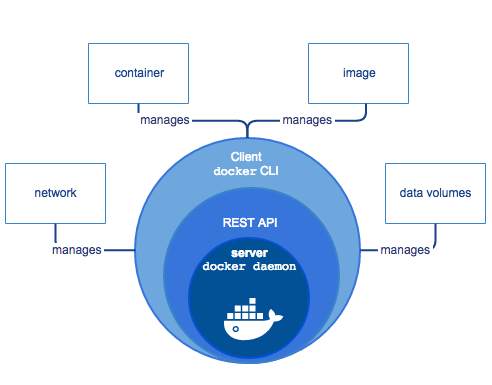

The Docker Engine is the client-server application that makes all of this containerization possible. It consists of three components:

- Docker CLI [Client]

- REST API

- Docker daemon [Server]


The CLI is the command-line interface we use to send commands to the Docker daemon through the intermediate REST API. You can also communicate with the API from your own applications. The client can be on the same machine as the daemon (server), or on a remote instance.
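To make the role of the REST API concrete, here is a small sketch that talks to the daemon directly with `curl` instead of going through the Docker CLI. The helper name `engine_api_get` is hypothetical, and the sketch assumes a Linux host where the daemon listens on its default Unix socket, `/var/run/docker.sock`, and where your user is allowed to access it.

```shell
# Hypothetical helper: send a GET request to the Docker Engine REST API, bypassing the CLI.
# Assumes the daemon listens on the default Unix socket of a Linux host.
engine_api_get() {
  curl --silent --unix-socket /var/run/docker.sock "http://localhost$1"
}
```

For example, `engine_api_get /version` returns roughly the information that `docker version` prints, and `engine_api_get /containers/json` returns the running containers that `docker ps` lists, both as JSON.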

The daemon is what forms the core of Docker. It is what takes care of creating, building, and managing all the containers, their images, network interfaces, mounts, etc. on your machine. Now let’s look at the Docker commands that can be used to build and run containers.

Docker Image

A Docker image is a read-only template that includes information about the containerized application, service, or process. When we talk about shipping and deploying containers, we are actually referring to the portability of the Docker images – that are eventually instantiated as containers. These images can be used to create as many containers as you like.

One image to multiple containers [Source]
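As a quick illustration of one image backing several containers, the sketch below starts three containers from the same image. The helper name and the container names `web1` to `web3` are made up for this example, and the official `nginx` image on Docker Hub is used only as a convenient stand-in.

```shell
# Hypothetical helper: start three independent containers from a single image.
run_three_from_one_image() {
  for i in 1 2 3; do
    docker run -d --name "web$i" nginx
  done
  # List only the containers whose names contain "web".
  docker ps --filter "name=web"
}
```

The image is downloaded once, and each container gets its own writable layer on top of the same read-only image.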

You can create your own custom images by specifying the instructions and other configuration parameters in a Dockerfile, or you can use pre-built images from Docker’s public registry of images, Docker Hub. We’ll be covering both of these in subsequent sections.

Docker Hub

Docker Hub is a public repository for the community that can be used to pull (download) pre-built container images of official applications, open-source projects, and unofficial pet project containers uploaded by the general public. You can use the hub to host your own container images (public or private) or easily pull to your instance images of popular applications and services. We’ll see this in action when we use the docker run command.
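Hosting your own image on Docker Hub usually comes down to three commands: log in, tag the local image with your repository name, and push it. The sketch below wraps them in a hypothetical helper, `publish_image`. The repository name you pass in is a placeholder for your own Docker Hub account.

```shell
# Hypothetical helper: publish a local image to a Docker Hub repository.
# $1: local image name
# $2: target repository and tag, in the form <your-username>/<image>:<tag>
publish_image() {
  docker login            # prompts for Docker Hub credentials
  docker tag "$1" "$2"    # give the local image its registry name
  docker push "$2"        # upload it to Docker Hub
}
```

A call might look like `publish_image my-test-app example-user/my-test-app:1.0`, where `example-user` stands in for a real account name. Anyone with access can then fetch the image with `docker pull`.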

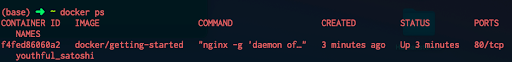

Docker run and docker ps

Assuming you have Docker installed, once you have the image ready (locally or remotely), you can use the docker run command for creating a container from the image. Let’s try this by running the docker/getting-started container on our machine –

docker run docker/getting-started

When you run the above command, Docker will pull the above image from Docker Hub onto your machine, and run a container using it.

docker run command

We can list running Docker containers through the CLI using the docker ps command, or view them in Docker’s Desktop application.

docker ps command for outputting running containers

Once the image has been downloaded, it will be cached on your system for future use.
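In day-to-day use, docker run is often combined with a few flags so that the container runs in the background and is reachable from the host. The sketch below shows one common combination. The helper names are hypothetical, and host port 8080 is an arbitrary choice (the tutorial image serves on port 80 inside the container).

```shell
# Hypothetical helpers for starting and cleaning up the tutorial container.
start_tutorial() {
  # -d: run in the background; -p 8080:80: map host port 8080 to container port 80;
  # --name: give the container a fixed name so it is easy to refer to later.
  docker run -d -p 8080:80 --name getting-started docker/getting-started
}

stop_tutorial() {
  docker stop getting-started   # stop the running container
  docker rm getting-started     # remove the stopped container
}
```

After `start_tutorial`, the tutorial should be available at http://localhost:8080, and `docker ps` should list the container by name.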

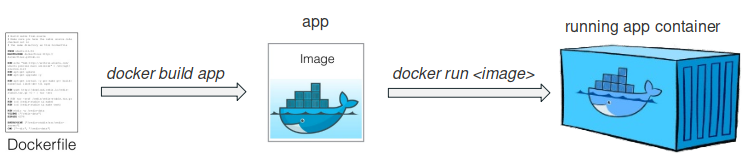

Dockerfile

What if you wish to containerize a custom application? This can be done using a Dockerfile. A Dockerfile is a plain-text file (not YAML) that lists, one instruction per line, how to build the Docker image you want, in an easy-to-read format.

It stores information like the OS image to be used, environment variables, libraries and dependencies to be installed, network configurations, mounting options, and other terminal commands that are required to build an image.

We’ll not be going into the details of Dockerfiles in this post, but here’s an example of a basic Dockerfile:

FROM ubuntu:22.04

RUN apt-get update && apt-get install -y python3 python3-pip

RUN python3 -m pip install flask

COPY . /opt/my-code

ENV FLASK_APP=/opt/my-code/app.py

ENTRYPOINT ["flask", "run", "--host=0.0.0.0"]

docker build


After you have created your image’s requisite Dockerfile (in a separate folder), you can build your Docker image using –

docker build . -t my-test-app

Now your custom Docker image is ready. You can run this image using the docker run command, and you are all set.
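Putting the build and run steps together might look like the sketch below. `build_and_run` is a hypothetical helper. It assumes the current directory contains the Dockerfile, and that the Flask app inside the container listens on all interfaces on port 5000 (Flask's default port).

```shell
# Hypothetical helper: build the image from the current directory, then run it.
build_and_run() {
  # -t names the image; "." is the build context containing the Dockerfile.
  docker build -t my-test-app .
  # Map host port 5000 to the port the app is assumed to listen on.
  docker run -d -p 5000:5000 --name my-test-app my-test-app
}
```

If those assumptions hold, the application should respond at http://localhost:5000 once the container is up.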

This was a brief overview of how you can create, build, and run Docker images and containers.

Below is an image from Docker’s documentation page that shows the overall functioning of the various Docker components. The Registry in the below diagram refers to any hosted cloud platform that stores and serves multiple Docker repositories. Docker Hub, for example, is a public registry. There are also other private registries that organizations can set up for their use.


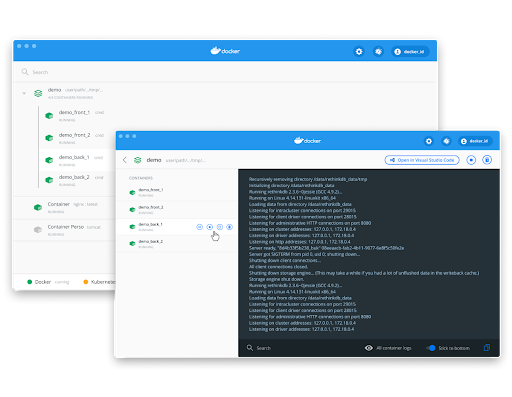

Docker’s Desktop Application

Docker also provides a neat Desktop application for Mac, Windows, and Linux that offers a clean dashboard for visualizing and managing running container instances through an intuitive user interface.

Docker Swarm

So far, when talking about Docker containers, for the sake of simplicity, we were talking in terms of a single machine (Docker host) running one or more containers.

But in real-life applications, hundreds of such hosts with hundreds or thousands of such containers need to be set up to serve applications. Besides, there are a lot more factors to consider – user traffic, compute requirements, load balancing, scaling of these instances, and more. This brings in the need for tools that can orchestrate clusters of such nodes: catering to thousands of user requests, scaling up and down based on demand, compensating for failed nodes, and so on. This is where container orchestration tools like Docker Swarm and Kubernetes come into the picture.

To put it simply, Docker Swarm runs on top of multiple Docker hosts to orchestrate multiple containers on multiple machines. Kubernetes is another popular container orchestration tool. You can read more about the differences between Docker and Kubernetes in our Kubernetes vs. Docker blog post.
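For a taste of what orchestration looks like in practice, here is a minimal single-node Swarm sketch. The helper name `swarm_demo`, the service name `web`, the `nginx` image, and host port 8080 are all illustrative choices rather than anything prescribed in this post.

```shell
# Hypothetical helper: create a one-node swarm and run a replicated service on it.
swarm_demo() {
  docker swarm init                                               # make this host a swarm manager
  docker service create --name web --replicas 3 -p 8080:80 nginx  # run 3 replicas of the service
  docker service ls                                               # show services and replica counts
  docker service scale web=5                                      # scale the service up to 5 replicas
}
```

In a real cluster, more hosts would join the swarm and the replicas would be spread across them. On a single machine, all replicas simply run side by side.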

Advantages of Using Docker Containers

Now that we have learned so much about Docker containers, before we close, let us recap the key points, along with some additional advantages of using containers.

Isolation

Docker containers provide an isolated execution environment where each application or each service can have its own share of libraries and dependencies that do not interfere with those of others on the same instance. This reduces the chances of dependency mismatches and other similar conflicts, allowing developers to rest assured of the requirements of each service being fulfilled.

Portability

Docker containers are extremely lightweight as compared to their hefty VM counterparts. This is because each container shares the OS kernel of the Docker host and only requires the extra OS software, instead of the whole OS with the kernel. These containers package all the required dependencies, and can run on any machine that runs Docker and provides a compatible kernel and CPU architecture. This makes containers small, portable packages that can easily be moved between environments with little storage or memory overhead.

Multi-Cloud Platform Support

Docker containers are now well supported and embraced by all major cloud platforms like Amazon Web Services (AWS), Google Cloud Platform (GCP), Microsoft Azure, OpenStack, Heroku, etc.

Version Control

Similar to Git repositories, you can version your Docker images, i.e. you can tag successive builds, inspect the layers that changed, roll back to a previous version, and much more. This acts as a good safety net, while also providing lots of flexibility to effectively track changes and switch between different versions.
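A simple way to approximate this workflow is with image tags. The sketch below uses hypothetical helper names, and assumes the `my-test-app` image from earlier in this post and a container of the same name.

```shell
# Hypothetical helpers for versioning an image with tags.

# Build the current directory into a versioned image; $1 is a tag such as 1.0.
build_version() {
  docker build -t "my-test-app:$1" .
}

# Show the layers (and the instructions that created them) for a given version.
show_layers() {
  docker history "my-test-app:$1"
}

# Roll back by replacing the running container with one from an older tag.
rollback_to() {
  docker rm -f my-test-app
  docker run -d --name my-test-app "my-test-app:$1"
}
```

For example, after `build_version 1.0` and `build_version 1.1`, calling `rollback_to 1.0` swaps the running container back to the earlier build.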

Scalability and Management

With the help of container orchestration tools like Docker Swarm and Kubernetes, it has become much easier to manage the scaling of your applications and management of node clusters that include thousands of hosts and containers.

Easier Development, Team Collaboration

We saw how containers can help developer teams collaborate better by getting everyone on the same software stack. This can accelerate your development pipelines and save a lot of time upfront that would otherwise have been spent setting up development environments from scratch and resolving compatibility issues.

Easy and Rapid Deployment

We also saw how shipping and deploying applications becomes much easier with Docker. DevOps here benefits a lot as developer and operation teams can work towards creating containers that bundle up all their mutual requirements into one easily deployable container, as opposed to manually setting up production environments on new servers.

Consistency: It Always Works, Everywhere!

By far the most important aspect of containers, and the reason they have been so widely adopted all over the world, is the assurance they provide: if a container works on one system, it will work the same way on any other system that runs Docker. This reliability enables developers to look beyond just getting the software to run predictably, and engage in more productive aspects of application development, like improving performance and building new features – aspects that are more likely to matter in the grand scheme of things.

Wrapping Up Our Containers

We covered almost everything there is to know about containers – all the important parts at least. We learned about what they are, their internals, how they operate, and how you can work with them using Docker’s highly flexible and intuitive API. We looked at the various components of Docker and discussed the functioning of the Docker ecosystem. Towards the end, we recapitulated the many advantages of containers to get a sense of how useful containers can be. Now go ahead and play around with Docker containers – try running some images of your favorite applications from Docker Hub, or build ones of your own!

If you liked this post, also make sure to check out the 8 Things You Should Know About Docker Containers post on our blog, for a more in-depth understanding of Docker and its components.

Cheers! Happy coding!