New Release: Memory Bloat Detection

When your app is experiencing memory bloat - a sharp increase in memory usage due to the allocation of many objects - it's a particularly stressful firefight.

Slow performance is one thing. Exhausting all of the memory on your host? That can bring your application down.

Memory bloat is a scary problem: our 2.0 release of the Scout agent aims to make tracking down memory hotspots as easy as tracking down performance bottlenecks.

Read on: I'll share what's hard about memory bloat detection, Scout's approach, and some further reading.

Why is memory bloat detection hard?

The approach today for tracking down memory bloat - using monitoring services that support it - is based on tracking the number of object allocations per-request.
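To make "object allocations per request" concrete, here is a minimal sketch that counts the objects allocated while a block of code runs, using Ruby's built-in `GC.stat` counter. The helper name `count_allocations` is hypothetical and this is not how the Scout agent is implemented; it also assumes Ruby 2.2 or later, where the counter key is `:total_allocated_objects`.

```ruby
# Count objects allocated while a block runs, using Ruby's GC counters.
# `count_allocations` is a hypothetical helper, not part of the Scout agent.
def count_allocations
  before = GC.stat(:total_allocated_objects)
  yield
  GC.stat(:total_allocated_objects) - before
end

# Two stand-in "requests": one builds 10 strings, the other 10,000.
small = count_allocations { 10.times.map { |i| "row #{i}" } }
large = count_allocations { 10_000.times.map { |i| "row #{i}" } }

puts "small request: #{small} allocations"
puts "large request: #{large} allocations"
```

The exact counts vary by Ruby version, but the second block should report far more allocations than the first.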

We don't disagree with this foundation.

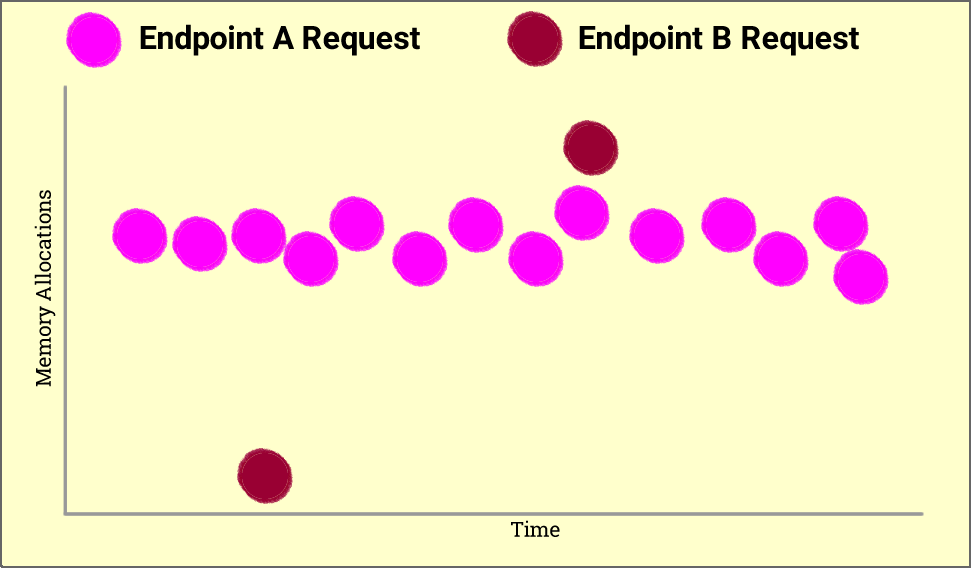

The problem? Take a look at this chart:

The chart above shows requests to two endpoints, Endpoint A and Endpoint B. Each circle represents a single request.

Which endpoint has a greater impact on memory usage?

If the y-axis were "response time" and you were optimizing CPU or database resources, you'd very likely start optimizing Endpoint A first. However, since we're optimizing memory, look for the endpoint with the single request that triggers the most allocations. In this case, Endpoint B has the greatest impact on memory usage.

The problem with existing approaches for tracking down memory bloat is that they treat memory allocations like timing metrics: reporting allocation means or percentiles. While this can help identify requests that are spending significant time in garbage collection, it doesn't help isolate areas of memory bloat.
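A small sketch shows how a mean can point at the wrong endpoint. The allocation counts below are made up for illustration and are not taken from the chart; Endpoint B has one memory-hungry request among otherwise light ones.

```ruby
# Hypothetical per-request allocation counts for two endpoints.
requests = {
  "Endpoint A" => [60_000, 70_000, 65_000, 72_000, 68_000],
  "Endpoint B" => [5_000, 4_000, 6_000, 5_000, 250_000]
}

# Summarize each endpoint by its mean and by its single largest request.
stats = requests.map do |endpoint, allocations|
  mean = allocations.sum / allocations.size.to_f
  { endpoint: endpoint, mean: mean, max: allocations.max }
end

by_mean = stats.max_by { |s| s[:mean] }
by_max  = stats.max_by { |s| s[:max] }

puts "Highest mean allocations: #{by_mean[:endpoint]}"
puts "Highest single-request allocations: #{by_max[:endpoint]}"
```

With these numbers, ranking by mean puts Endpoint A first (67,000 vs. 54,000), while ranking by the largest single request puts Endpoint B first (250,000 vs. 72,000).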

Additionally, we think optimizing memory allocation metrics for GC timing is focusing on the less critical, less common problem: memory bloat can unexpectedly crash your app. Lots of time in GC will merely slow it down.

Memory is a high-water mark in Ruby: all it takes is a single, memory-hungry request for your app to plateau at a new memory usage mark. While Ruby does release memory, it happens so slowly, it's best to think of memory usage as a metric that has

Introducing Memory Bloat Detection

Our approach to memory usage is fundamentally different from our approach to timing metrics: it's all about peak memory consumption per request, not means or percentiles.

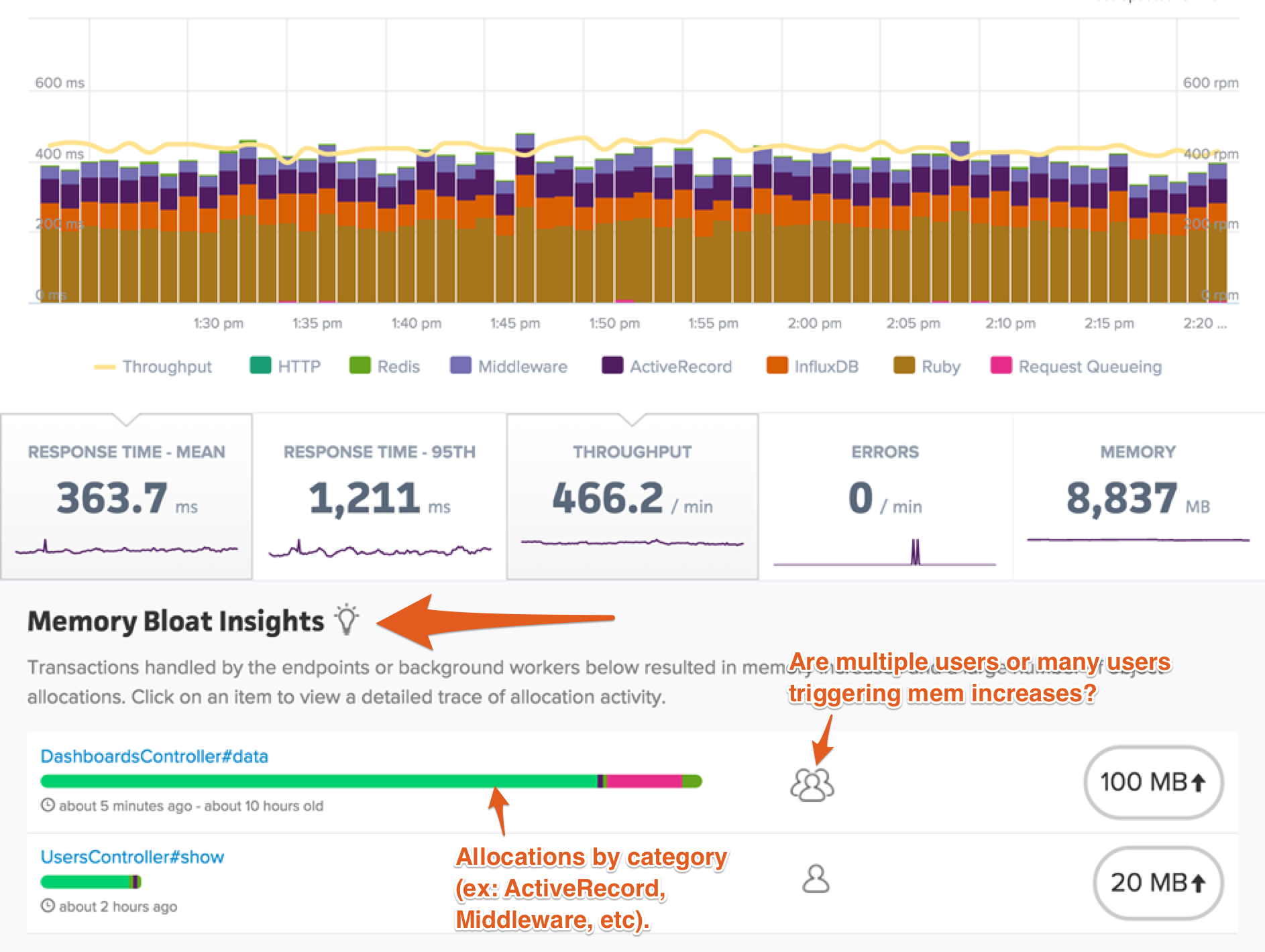

1. Memory Bloat Insights

On your application overview, there's a new "Memory Bloat Insights" section. This shows controller actions and background jobs that triggered large memory increases. It takes the guesswork out of memory optimization.

2. Memory Traces

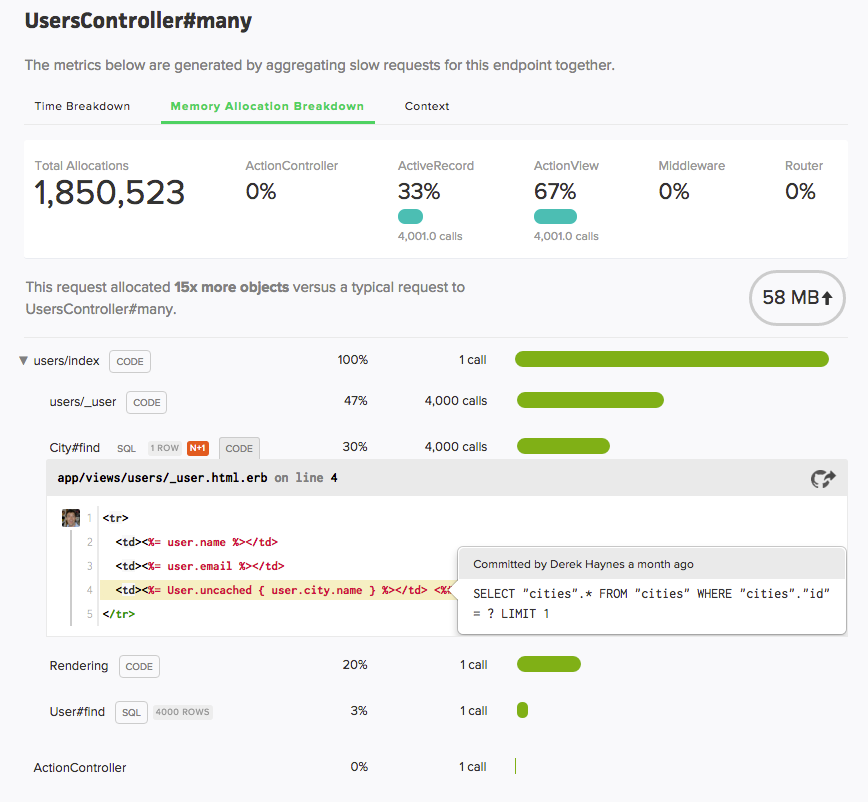

When inspecting a transaction trace, you'll see a "Memory Allocation Breakdown" section:

One detail I think you'll appreciate: memory allocation numbers always sound large.

Our integration with GitHub extends to memory metrics: not only can you see memory-hungry code right in your browser,

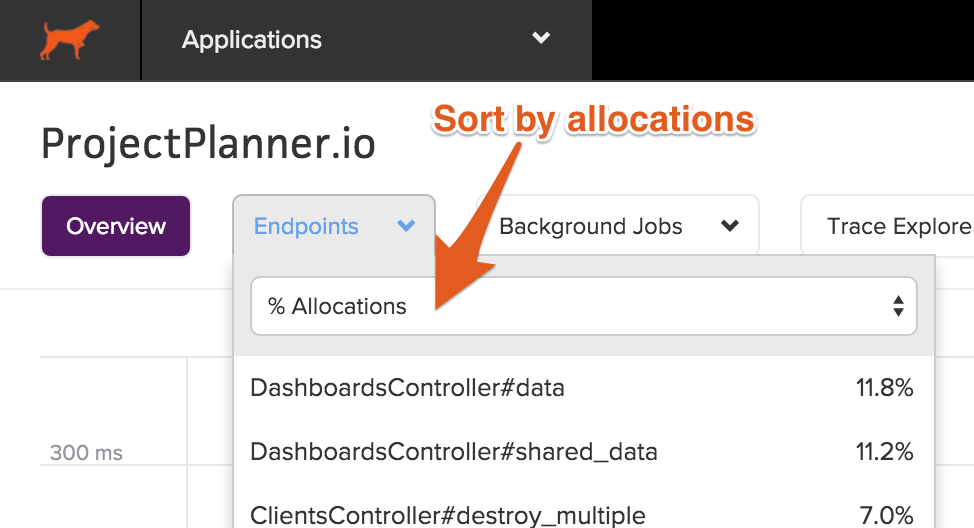

3. Sorting

You can sort by memory allocations throughout the UI: from the list of

Agent updates

To collect the memory-related metrics we needed in a performant manner, we use C extensions for gathering allocation and memory usage metrics.

These updates should add no noticeable impact

Requirements and upgrade instructions

Memory instrumentation requires a compiler and Ruby 2.1+.

Assuming your Gemfile entry for Scout is just:

gem 'scout_apm'

Simply update your gem version:

bundle update scout_apm

Commit and deploy.

Learning more about memory bloat

Resolving memory bloat requires a different approach than resolving timing-based bottlenecks. To help orientate you,

Try Scout today - free signup

See if Scout can help you identify memory bloat - try Scout for free, no credit card required.